Trees usually expose parent links that move upward toward the root, but walking those links one step at a time doesn’t scale well when the structure holds thousands or millions of nodes. Binary lifting builds a table of parent jumps that shortens that walk. This idea rests on two parts. Parent references sit at distances that are powers of two, and each jump can cut down the number of steps needed to reach a target ancestor. This technique works well in Java and matches how interviews and production systems treat repeated ancestor queries.

How Binary Lifting Works

Binary lifting starts with a tree that already has parent links for every node and then stretches those links into longer jumps. Instead of walking upward one edge at a time, this method prepares shortcuts at distances that double each step, so a climb that would have taken many edges turns into a short sequence of jumps. Every jump comes from a table entry that tells you which ancestor sits two steps up, four steps up, eight steps up, and so on, up to a safe limit for the tree size or height. Java fits this style nicely because arrays and integer math keep everything predictable and fast after the table is ready.

Many code bases label nodes from zero or one and store the tree in an adjacency list. That layout gives a stable index for every node, which fits nicely with a table of ancestors. Every row in that table belongs to a single node, and every column belongs to a jump length that doubles from the previous one. Such a regular grid is what turns a slow climb through parents into a walk that grows roughly with the logarithm of the tree size instead of the tree height itself.

Jump Table Structure

Jump tables for binary lifting sit in a two dimensional array. First dimension holds the node id, and the second dimension holds the exponent that picks a power of two. Entry up[v][0] stores the direct parent of node v. Entry up[v][1] stores the ancestor two steps up. Entry up[v][2] stores the ancestor four steps up, and the same idea keeps going for higher powers of two. Root nodes need a special parent value, so code usually sets that parent to the root itself or to a sentinel such as negative one.

Many implementations pick a fixed maxLog value that is large enough for the worst case and then reuse that value across all nodes. One reasonable bound for maxLog comes from the maximum depth of the tree. Depth never exceeds the number of nodes, so a safe upper bound for the exponent is about log base two of the node count plus one. Java can compute that bound at runtime with a short loop.

This allocation step fixes the table layout and guarantees that any future jump length that fits under the tree depth has a column ready to hold it. Every node has entries across all columns, even if some of those entries end up equal to the root marker.

Some trees arrive as parent arrays instead of adjacency lists. That format lines up directly with the base column of a jump table, because the parent array already stores parent[v] for every node. That table layout can reuse that data without extra traversal work.

This build style leaves up[v][0] ready for use, so higher layers only need to combine entries from this base column.

Table Construction

Tree based problems usually provide edges that form an undirected tree. First pass needs to pick a root and then walk outward to assign a parent and a depth to every node. That walk also sets the base column of the jump table. Many developers start with a depth first search because it naturally follows tree edges and matches the recursive way trees are defined.

This traversal assigns a direct parent to every child node and also fills an array of depths that matches that structure. Calling dfsBuild(root, root, graph, up, depth) treats the root as its own parent, which avoids out of range indices in later steps and keeps table entries valid for the whole tree.

Very tall trees can create deep recursion chains, so some code bases prefer an explicit stack. Logic stays similar, but the stack replaces the call stack and keeps control in your hands.

That loop fills the same two arrays without relying on recursion limits. After either variant runs, column zero of the table holds direct parents for all nodes, and the depth array is ready for queries that need level information.

Higher layers of the table reuse the work from column zero. Entry up[v][j] jumps from node v to its 2^j ancestor by chaining two jumps of length 2^(j - 1). That rule means the table can grow column by column, with each column reading from the previous one.

Every new column uses already computed entries and folds two shorter jumps into a longer one. After this pass completes, the jump table contains ancestors at all power of two distances that the tree supports.

Depth Tracking

Depth is a companion to the jump table. Each node carries a depth value that counts edges from the root to that node. Many future queries start by comparing depths, and any move upward in the tree lowers that value. Keeping depth next to the jump table means those queries can line up nodes at the same level before they climb, instead of working with uneven positions.

Depth fits neatly into the initial traversal. Every time the search goes from a parent to a child, it adds one to the depth of the parent and assigns that value to the child. Repeated moves outward from the root naturally store the length of the route for every node.

Depth values from this breadth first pass match the same structure that the jump table expects. Root depth sits at zero. Children have depth one. Nodes further away hold higher depth values that record the length of their way back to the root.

Both depth and jump tables work side by side. Table entries make long jumps possible, and depth values tell future logic how far those jumps need to go. After both structures sit in memory, ancestors can be reached without walking every edge between a node and the root.

Ancestor Queries with Binary Lifting

Generally speaking, ancestor queries sit at the center of many tree problems. Code frequently needs answers to questions such as who is the 7th ancestor of a node or which node is the lowest common ancestor of two positions in the tree. Binary lifting turns those questions into bit operations. Instead of walking from a node up to its parent again and again, ancestor queries break the walk into a sequence of jumps whose lengths are powers of two. Jump tables built earlier provide those jumps, so the query code only needs to read entries and move current node pointers.

Lifting a Node by k Steps

Lifting a node by k steps means moving from a starting node up toward the root through its ancestors while counting exactly k edges. Naive code would loop k times and follow the parent link in each step, which grows too slow for large k or many queries. Binary lifting treats k as a binary number and turns that long walk into a handful of jumps. Every bit in k decides whether to take a jump of a certain length from the jump table.

When k is written in binary, a one in bit position j means the query needs to move 2^j levels upward. Jump tables store exactly those 2^j ancestors in their columns. That match between bit positions and column indices is what gives the lift method its speed.

This function walks through the bits of k from least significant to most significant and checks each bit. As soon as a bit is set, the code jumps from the current node to the ancestor stored in the matching column. The loop stops once all bits are processed or a sentinel parent such as negative one appears, which means the tree root has been passed.

Some code bases prefer to scan through all possible jump lengths rather than shifting k. That style uses the same logic but tends to feel natural when maxLog is already known and stored next to the table.

This version walks through all jump sizes and checks whether k includes that jump by testing the mask (1 << bit). Both variants rely on the same rule. Every column represents a jump length that doubles from the previous one, so any distance k that fits below the tree height can be written as a sum of those jump lengths.

Lowest Common Ancestor through Binary Lifting

LCA or Lowest common ancestor, refers to the deepest node that is an ancestor of two given nodes. Many tree problems in contests and production code need this answer to find distances, merge values on paths, or apply updates across subtrees. Binary lifting gives a way to compute LCA that runs in logarithmic time after the preprocessing step that builds jump tables and depths.

LCA queries run in two phases. First, the deeper node climbs up so that both nodes are at the same depth. Second, both nodes climb in sync while trying to stay as low as possible in the tree. The climb stops at the last level where their ancestors differ, and the parent one step above that point is the lowest common ancestor.

Depth equalization usually uses the same lifting method from the previous subsection, but many implementations inline that logic. That keeps all LCA work in a single method and avoids extra calls.

This helper moves a node upward to match a target depth by walking through the bits of the depth difference. Once a query has this tool, LCA becomes a matter of aligning depths and then jumping in larger steps.

This version brings the deeper node to the same depth as the shallower node, then climbs both in lockstep from the highest jump length down to shorter ones. As long as the two jump pointers differ, both nodes move up by the same jump size. Once all columns have been tested, both nodes sit just below the common ancestor, and the parent link from either node gives the LCA.

Some developers separate the lifting and final parent step into a reusable helper method when LCA calls appear in many parts of a code base. That layout keeps complexity in one place and lets other parts of the code call a short function for ancestor work.

Time and Space

Time and memory use for binary lifting follow a stable pattern as trees grow. Jump tables store an entry for every node and for every jump length, where jump lengths are the powers of two that fit below the maximum depth. Trees with n nodes never need more than floor(log2(n)) + 1 jump lengths. That means the table size grows on the order of n times log n, with each entry being just an integer index into the node array.

Preprocessing has two main costs. A traversal step visits every edge of the tree to fill parents and depths, which runs in linear time. A second pass builds the jump table columns. That pass loops over all nodes for every jump length and runs in n times log n. Those two phases together form the one time cost that prepares the tree for ancestor queries. Query time drops sharply compared to walking parent pointers one by one. Any single ancestor query needs at most one jump for every bit in the distance value or, in the LCA case, one jump per column in the table. That keeps query time on the order of log n, even when trees hold hundreds of thousands or millions of nodes.

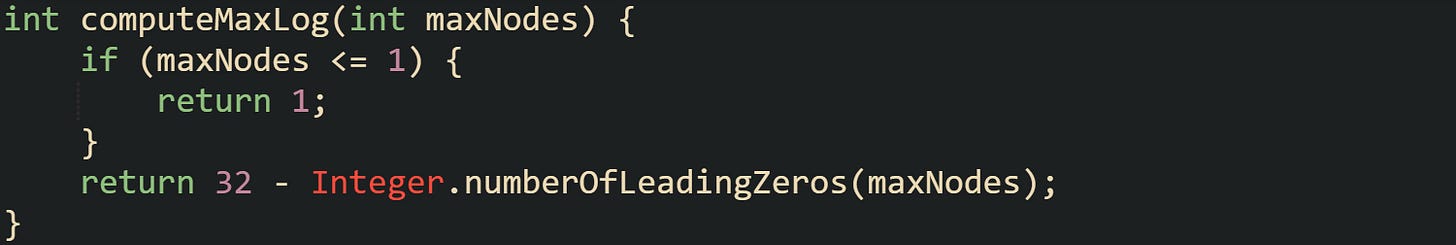

Some implementations pick maxLog from a constant that matches an upper bound on node count. Others compute it from the maximum depth observed during preprocessing. A helper that derives maxLog from a hard limit on n stays very common in contest style code.

This helper finds the largest power of two not greater than maxNodes, counts how many bits are needed to represent that value, and adds one more jump level for safety. That keeps the table tall enough for all ancestor jumps but still compact in memory.

Binary lifting trades a moderate amount of extra storage and preprocessing time for very fast queries. After the tree has a jump table and depth array in place, ancestor and LCA queries stay stable and predictable even when the number of queries grows very large.

Conclusion

Binary lifting turns parent pointers and depth values into a compact jump table that answers ancestor questions with bit operations instead of long walks through the tree. Preprocessing fills that table in n log n time, then each ancestor or LCA query touches only a small number of entries, so cost grows with log n rather than tree height. Java arrays, integer arithmetic, and a fixed column count keep the structure predictable in memory and practical to reuse wherever a rooted tree shows up. Learning to read and build these tables gives you a more concrete way to think about skipping through hierarchies instead of stepping through them edge by edge.

![int n = numberOfNodes; // total nodes in the tree int maxLog = 1; while ((1L << maxLog) <= n) { maxLog++; } int[][] up = new int[n][maxLog]; // up[v][j] will store the 2^j-th ancestor of v int n = numberOfNodes; // total nodes in the tree int maxLog = 1; while ((1L << maxLog) <= n) { maxLog++; } int[][] up = new int[n][maxLog]; // up[v][j] will store the 2^j-th ancestor of v](https://substackcdn.com/image/fetch/$s_!28Td!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fce6ad781-732b-43c4-aa17-ca32ac185a0e_1485x339.png)

![int[] parent = new int[n]; // parent[v] holds the direct parent, root points to itself int[][] up = new int[n][maxLog]; for (int v = 0; v < n; v++) { up[v][0] = parent[v]; } int[] parent = new int[n]; // parent[v] holds the direct parent, root points to itself int[][] up = new int[n][maxLog]; for (int v = 0; v < n; v++) { up[v][0] = parent[v]; }](https://substackcdn.com/image/fetch/$s_!nSvU!,w_2400,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F8d054ee7-64ca-471a-8641-eb4ace82d5f7_1476x209.png)

![void dfsBuild( int v, int parent, List<List<Integer>> graph, int[][] up, int[] depth ) { up[v][0] = parent; for (int nei : graph.get(v)) { if (nei == parent) { continue; } depth[nei] = depth[v] + 1; dfsBuild(nei, v, graph, up, depth); } } void dfsBuild( int v, int parent, List<List<Integer>> graph, int[][] up, int[] depth ) { up[v][0] = parent; for (int nei : graph.get(v)) { if (nei == parent) { continue; } depth[nei] = depth[v] + 1; dfsBuild(nei, v, graph, up, depth); } }](https://substackcdn.com/image/fetch/$s_!-SRj!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fe74ab4b4-8ed7-4aef-b5de-70246eef8c8d_1484x671.png)

![void buildBaseLayer( int root, List<List<Integer>> graph, int[][] up, int[] depth ) { Deque<Integer> stack = new ArrayDeque<>(); stack.push(root); up[root][0] = root; depth[root] = 0; while (!stack.isEmpty()) { int v = stack.pop(); for (int nei : graph.get(v)) { if (nei == up[v][0]) { continue; } up[nei][0] = v; depth[nei] = depth[v] + 1; stack.push(nei); } } } void buildBaseLayer( int root, List<List<Integer>> graph, int[][] up, int[] depth ) { Deque<Integer> stack = new ArrayDeque<>(); stack.push(root); up[root][0] = root; depth[root] = 0; while (!stack.isEmpty()) { int v = stack.pop(); for (int nei : graph.get(v)) { if (nei == up[v][0]) { continue; } up[nei][0] = v; depth[nei] = depth[v] + 1; stack.push(nei); } } }](https://substackcdn.com/image/fetch/$s_!DB0A!,w_2400,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F3e23b188-0de8-47aa-8e73-0793825e7bfd_1552x805.png)

![void buildJumpLayers(int[][] up) { int n = up.length; int maxLog = up[0].length; for (int j = 1; j < maxLog; j++) { for (int v = 0; v < n; v++) { int mid = up[v][j - 1]; up[v][j] = (mid < 0) ? -1 : up[mid][j - 1]; } } } void buildJumpLayers(int[][] up) { int n = up.length; int maxLog = up[0].length; for (int j = 1; j < maxLog; j++) { for (int v = 0; v < n; v++) { int mid = up[v][j - 1]; up[v][j] = (mid < 0) ? -1 : up[mid][j - 1]; } } }](https://substackcdn.com/image/fetch/$s_!Ujvv!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F7860b8b5-1023-4a03-9dd3-4963c4bb97e3_1479x460.png)

![void computeDepths( int root, List<List<Integer>> graph, int[] depth ) { Arrays.fill(depth, -1); Deque<Integer> queue = new ArrayDeque<>(); queue.add(root); depth[root] = 0; while (!queue.isEmpty()) { int v = queue.poll(); for (int nei : graph.get(v)) { if (depth[nei] != -1) { continue; } depth[nei] = depth[v] + 1; queue.add(nei); } } } void computeDepths( int root, List<List<Integer>> graph, int[] depth ) { Arrays.fill(depth, -1); Deque<Integer> queue = new ArrayDeque<>(); queue.add(root); depth[root] = 0; while (!queue.isEmpty()) { int v = queue.poll(); for (int nei : graph.get(v)) { if (depth[nei] != -1) { continue; } depth[nei] = depth[v] + 1; queue.add(nei); } } }](https://substackcdn.com/image/fetch/$s_!AehP!,w_2400,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F0c70a644-6601-4081-9575-4c6cc5acb656_1552x734.png)

![int getKthAncestor(int node, int k, int[][] up) { int level = 0; int maxLog = up[0].length; while (k > 0 && node >= 0 && level < maxLog) { if ((k & 1) == 1) { node = up[node][level]; } k >>= 1; level++; } return node; } int getKthAncestor(int node, int k, int[][] up) { int level = 0; int maxLog = up[0].length; while (k > 0 && node >= 0 && level < maxLog) { if ((k & 1) == 1) { node = up[node][level]; } k >>= 1; level++; } return node; }](https://substackcdn.com/image/fetch/$s_!ouoR!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Ffd235c6f-b107-4800-b480-26169401f8bf_1481x546.png)

![int getKthAncestorWithMask(int node, int k, int[][] up) { int maxLog = up[0].length; for (int bit = 0; bit < maxLog && node >= 0; bit++) { if ((k & (1 << bit)) != 0) { node = up[node][bit]; } } return node; } int getKthAncestorWithMask(int node, int k, int[][] up) { int maxLog = up[0].length; for (int bit = 0; bit < maxLog && node >= 0; bit++) { if ((k & (1 << bit)) != 0) { node = up[node][bit]; } } return node; }](https://substackcdn.com/image/fetch/$s_!q2Yd!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F8c0906cd-5f0c-428f-98cb-5766f1c4e2f5_1481x377.png)

![int liftToDepth(int node, int targetDepth, int[][] up, int[] depth) { int diff = depth[node] - targetDepth; int bit = 0; int maxLog = up[0].length; while (diff > 0 && node >= 0 && bit < maxLog) { if ((diff & 1) == 1) { node = up[node][bit]; } diff >>= 1; bit++; } return node; } int liftToDepth(int node, int targetDepth, int[][] up, int[] depth) { int diff = depth[node] - targetDepth; int bit = 0; int maxLog = up[0].length; while (diff > 0 && node >= 0 && bit < maxLog) { if ((diff & 1) == 1) { node = up[node][bit]; } diff >>= 1; bit++; } return node; }](https://substackcdn.com/image/fetch/$s_!IO5K!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fc5e90891-f4b6-45cc-ba7b-b27482d109ee_1487x585.png)

![int lcaBinaryLifting(int u, int v, int[][] up, int[] depth) { if (depth[u] < depth[v]) { int temp = u; u = v; v = temp; } u = liftToDepth(u, depth[v], up, depth); if (u == v) { return u; } int maxLog = up[0].length; for (int bit = maxLog - 1; bit >= 0; bit--) { if (up[u][bit] != up[v][bit]) { u = up[u][bit]; v = up[v][bit]; } } return up[u][0]; } int lcaBinaryLifting(int u, int v, int[][] up, int[] depth) { if (depth[u] < depth[v]) { int temp = u; u = v; v = temp; } u = liftToDepth(u, depth[v], up, depth); if (u == v) { return u; } int maxLog = up[0].length; for (int bit = maxLog - 1; bit >= 0; bit--) { if (up[u][bit] != up[v][bit]) { u = up[u][bit]; v = up[v][bit]; } } return up[u][0]; }](https://substackcdn.com/image/fetch/$s_!dSUC!,w_2400,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Faa4ca223-084c-4345-bad2-563ad2bc58ec_1551x733.png)